I'm going to go really out of my comfort zone here. I'm not going to stand here and tell you how this thing works in a monotone, disengaged voice like I normally do. What I really want to talk about is really broad, and a little all over the place. I'm going to talk about web archiving, what I've learned, what I'm still struggling with, some projects I've been working on, and power, ethics, and responsibility. Hopefully I'll get there in my own little roundabout way, and maybe it will be coherent.

The revolution will be preserved

Nick Ruest (@ruebot), York University

I have to start somewhere, so I'll start here. Almost a year ago, I was in a meeting and was presented with this problem. YFile, a daily university newspaper (it used to be a paper, now it's a website), had been taken over by marketing a while back, and they deleted all their back content. YFile is an official university publication, so it is an official university record, and it will eventually end up in the archives. So it will eventually be our problem; the library's problem. Plainly put, we live in a reality where official records are born and disseminated via the Internet. Many institutions have a strategy in place for transferring official university records that are print or tactile to university archives, but not much exists strategy-wise for websites. So, I naively decided to tackle it.

I tend to just do things. I don't ask permission. I apologize later if I have to (like taking down the YFile server). If I make mistakes, good (crawling incorrectly, not knowing to use page requisites, mirror doesn't grab everything!)! Then I've learned something. What I am doing isn't new, but then again it kinda is. It is a really weird place. I need to crawl a website every day. The Internet Archive comes around whenever it does. There is no way to give the Internet Archive/Wayback Machine a whole bunch of warc files, and I'm not going to pay for Archive-It.

That won't work for me at all when I have some idea how to do it all myself. So, what is the problem? I need to capture and preserve a website every day. I want to provide the best material to a researcher. I want to keep a fine eye on preservation, but not be a digital pack rat, and I need to constantly keep the librarian and archivist in me pleased. That is always the item vs. collection debate, and which of those gets the most attention.

How easy is it to grab a website? Pretty damn easy if you're using at least wget 1.14 which has warc support.

$ wget --warc-file=foo http://yfile.news.yorku.ca/

Opening WARC file ‘foo.warc.gz’.

--2013-05-08 10:17:49-- http://yfile.news.yorku.ca/

Resolving yfile.news.yorku.ca (yfile.news.yorku.ca)... 130.63.173.84

Connecting to yfile.news.yorku.ca (yfile.news.yorku.ca)|130.63.173.84|:80... connected.

HTTP request sent, awaiting response... 200 OK

Length: unspecified [text/html]

Saving to: ‘index.html’

0K .......... .......... .......... . 180K=0.2s

2013-05-08 10:17:49 (180 KB/s) - ‘index.html’ saved [32084]

How many people here know what a warc is? WARC stands for Web ARChive. It is an ISO standard (ISO 28500). It is basically a file (that can get massive very quickly) that aggregates the resources you request into a single file along with crawl metadata and checksums. PROVENANCE! This is what the beginning of a warc file looks like.


WARC/1.0

WARC-Type: warcinfo

Content-Type: application/warc-fields

WARC-Date: 2013-05-08T14:17:49Z

WARC-Record-ID: <urn:uuid:d3f6dce5-72f5-4630-8d80-c2d613b5f323>

WARC-Filename: foo.warc.gz

WARC-Block-Digest: sha1:TDXOONUN4LTIM7KZ2NJTUALCLC3QHRTF

Content-Length: 227

software: Wget/1.14 (linux-gnu)

format: WARC File Format 1.0

conformsTo: http://bibnum.bnf.fr/WARC/WARC_ISO_28500_version1_latestdraft.pdf

robots: classic

wget-arguments: "--warc-file=foo" "http://yfile.news.yorku.ca/"

And here is a selection from the actual archive portion. That is my brief crash course on warc. We can talk about it more later if you have questions. I need to keep moving along :-)

HTTP/1.1 200 OK

Date: Wed, 08 May 2013 14:17:49 GMT

Server: Apache/2.2.14 (Ubuntu)

X-Powered-By: PHP/5.3.2-1ubuntu4.18

Set-Cookie: spo_171_fa=0125371a1f04cde1ecfd0a10871f4405; expires=Wed, 08-May-2013 14:47:49 GMT; path=/

X-Pingback: http://yfile.news.yorku.ca/xmlrpc.php

Keep-Alive: timeout=15, max=100

Connection: Keep-Alive

Transfer-Encoding: chunked

Content-Type: text/html; charset=UTF-8

<!DOCTYPE html PUBLIC "-//W3C//DTD XHTML 1.0 Transitional//EN" "http://www.w3.org/TR/xhtml1/DTD/xhtml1-transitional.dtd">

<html xmlns="http://www.w3.org/1999/xhtml">

<head>

<title>YFile</title>

<meta http-equiv="Content-Type" content="text/html; charset=UTF-8" />
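For anyone who wants to poke at one of these files from the shell, here is a minimal sketch, assuming a gzipped warc like the `foo.warc.gz` produced above. The function name `warc_record_types` is made up for illustration. It simply counts lines that start with `WARC-Type:`, so a captured page that happens to contain such a line would inflate the count; treat it as a quick look, not a parser.

```shell
#!/bin/bash
# Hypothetical helper: count the records in a gzipped warc, grouped by WARC-Type.
# Assumes header lines end in CRLF (as in the WARC format) and strips the CR.
warc_record_types() {
  # gzip -dc also handles files made of several concatenated gzip members.
  # grep -a treats the stream as text even when binary payloads are present.
  gzip -dc "$1" | grep -a '^WARC-Type:' | tr -d '\r' | sort | uniq -c
}
```

Calling `warc_record_types foo.warc.gz` prints one line per record type with its count. To see just the opening warcinfo record, `gzip -dc foo.warc.gz | head -n 12` is usually enough.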

So, warcs are a little weird to deal with on their own. You can disseminate them with the Wayback Machine, but I assume nobody but a few people on this planet wants to see a page full of just warc files. Building something browsable takes a little bit more work. So, I decided to snag a pdf and a screenshot of the front page of the site that I am grabbing, using wkhtmltopdf and wkhtmltoimage. Then I toss this all in a single bash script, and give it to cron.

So this is what I have come up with. This is how I capture and preserve a website. (The pdf/image came from Peter Binkley - X virtual framebuffer is an X11 server that performs all graphical operations in memory, not showing any screen output.)

#!/bin/bash

DATE=`date +"%Y_%m_%d"`

cd /mnt/diy/DIY/web-archiving/warc

mkdir YFILE_$DATE

cd YFILE_$DATE

xvfb-run -a -s "-screen 0 1280x1024x24" wkhtmltopdf --use-xserver --dpi 200 --page-size Letter http://yfile.news.yorku.ca/ YFILE_$DATE.pdf

xvfb-run -a -s "-screen 0 1280x1024x24" wkhtmltoimage --use-xserver http://yfile.news.yorku.ca/ temp.png

pngcrush temp.png YFILE_$DATE.png

rm temp.png

/usr/local/bin/wget --page-requisites --mirror --warc-file=YFILE_$DATE --wait=3 http://yfile.news.yorku.ca/

cp YFILE_$DATE.warc.gz /mnt/diy/DIY/web-archiving/wayback

cd ..

/usr/bin/python /usr/local/bin/bagit.py --processes 4 --contact-name 'Nick Ruest' --contact-email 'ruestn@yorku.ca' --source-organization 'York University' --organization-address '4700 Keele Street Toronto, Ontario M3J 1P3 Canada' --contact-phone '+1 (416)736-2100 x 33235' --external-description 'YFile daily web archive' YFILE_$DATE

zip -r YFILE_$DATE.zip YFILE_$DATE

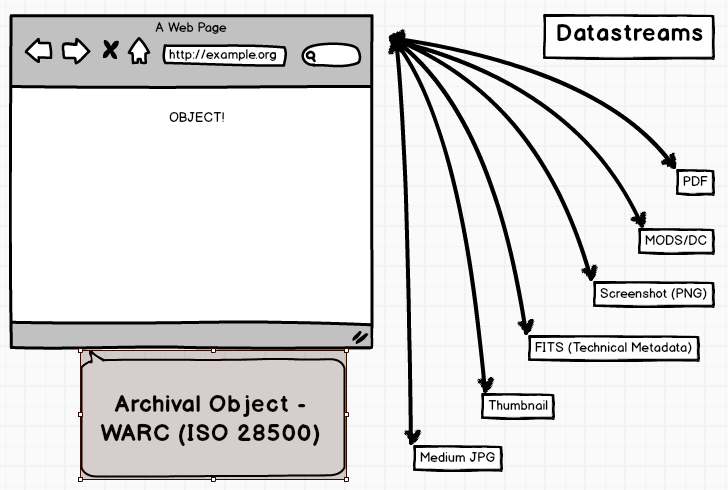

rm -rf YFILE_$DATE

I've been running that script on cron since last October. Now what? Like I said before, nobody wants to see a page full of warc files. So, I started working with the tools and platforms that I know. In this case, Drupal, Islandora, and Fedora Commons, and created a solution pack. A solution pack, in Islandora parlance, is a Drupal module that integrates with the main Islandora module and the Tuque API to deposit, create derivatives, and interact with a given type of object. So, we have solution packs for Audio, Video, Large Images, Images, PDFs, and paged content.

Now what?

What does it do? It adds all the required Fedora objects to allow users to ingest, create derivatives, and retrieve web archives through the Islandora interface: content models, datastream composite models, forms, and collection policies. The current iteration of the module allows one to batch ingest a bunch of objects for a given collection, and it will create all of the derivatives (thumbnail and display image) and index any provided metadata in Solr. I'm working on indexing the actual warcs in Solr so those can be searched as well. Show a couple of screenshots.

Web ARChive Solution Pack

Batch-ingest.zip

|-- foo.warc

|-- foo.pdf

|-- foo.png

|-- foo.xml
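One way to sanity-check a batch before zipping it up, as a rough sketch: the layout above pairs each warc with a pdf, png, and xml of the same base name, so a directory can be scanned for warcs missing any sibling. The function name `missing_siblings` and the idea of checking a directory first are assumptions for illustration, not part of the solution pack.

```shell
#!/bin/bash
# Hypothetical pre-flight check for a batch directory.
# For every *.warc, print the name of each expected sibling file
# (.pdf, .png, .xml) that is not present. No output means nothing is missing.
missing_siblings() {
  local dir="$1" warc base ext
  for warc in "$dir"/*.warc; do
    [ -e "$warc" ] || continue        # glob matched nothing
    base="${warc%.warc}"
    for ext in pdf png xml; do
      [ -e "$base.$ext" ] || echo "$(basename "$base").$ext"
    done
  done
}
```

Running `missing_siblings ./batch` on a directory that holds `foo.warc`, `foo.pdf`, and `foo.png` would print `foo.xml`.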

collection object (parent)

collection object (object/site)

object (crawl)

Here is what my object looks like. Explain it a bit.

TODO

- Solr indexing (individual warcs)

- Wayback Machine integration

- Automatic harvesting

- Automatic metadata harvesting (???)

Now for something different.

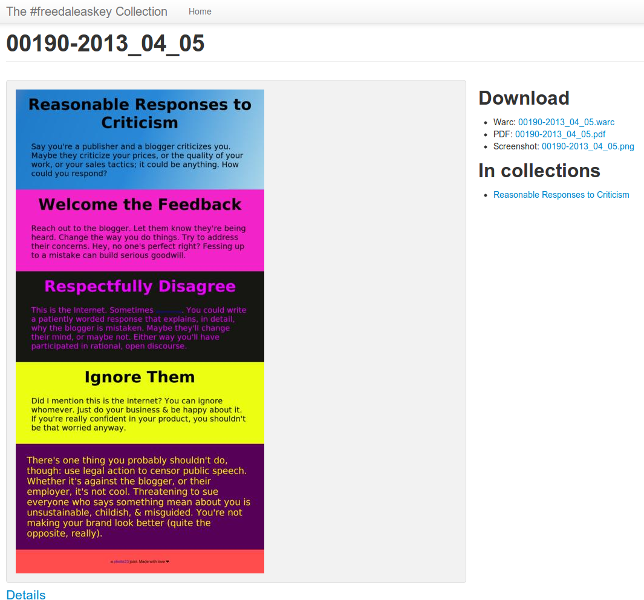

As I was working on this web archiving stuff, and slowly building a Web ARChive solution pack for Islandora, this guy happened. Dale is my former boss, and a great guy. Through all of the chaos and bullshit at McMaster, we were able to rise above it and forge a great relationship. So, when he put out a call for help, I jumped up immediately and volunteered to take care of web archiving.

I started with the initial list of sites that John Dupuis pulled together, and crawled it a single time, all by hand! Talk about a lesson learned! Then I thought about it a bit, and talked to Dale, and figured that we should just capture these every day. Create a record, an archive, of this still unfolding story. To see if anything is taken down. How comments unfold over time. How associations and organizations don't understand that COOL URLS DO NOT CHANGE! But, really just to document this. Because we can. We have the power.

So, what I did is start yet another little pet project. The wonderful Sarah Shujah organized a hackfest during reading week, and I just hunkered down and tossed together a shell script, using what I learned from the YFile stuff, that just parsed a text file list of all the sites John Dupuis and I could find about this story.

arxivdaleascii is just a shell script like the one I created for grabbing YFile, except it iterates line-by-line over a text file, and only grabs a single page. Not an entire site, since 99% of these are blog posts. This runs every night at 1am, and has been running since Feb. 20.
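The general shape of that loop can be sketched as follows. This is not the actual arxivdaleascii script: the function names, the slug-based file naming, and the `DRY_RUN` switch are all assumptions added for illustration, and the pdf/png capture and bagging steps from the YFile script are left out. It uses the same wget options as the YFile script, minus `--mirror`, so only the single page and its requisites are fetched.

```shell
#!/bin/bash
# Hypothetical sketch: one warc per URL listed in a text file, one URL per line.

# Turn a URL into a filesystem-safe slug to use in the warc file name.
slugify_url() {
  printf '%s' "$1" | sed -e 's|^[a-zA-Z]*://||' -e 's|[^A-Za-z0-9]|_|g' -e 's|_*$||'
}

# Crawl every line of the list that is not blank and does not start with '#'.
# With DRY_RUN=1 the wget commands are printed instead of executed.
crawl_list() {
  local list="$1" date url slug
  date=$(date +"%Y_%m_%d")
  while IFS= read -r url || [ -n "$url" ]; do
    case "$url" in ''|'#'*) continue ;; esac
    slug=$(slugify_url "$url")
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "wget --page-requisites --warc-file=${slug}_${date} --wait=3 $url"
    else
      wget --page-requisites --warc-file="${slug}_${date}" --wait=3 "$url"
    fi
  done < "$list"
}
```

`DRY_RUN=1 crawl_list urls.txt` shows what would be fetched without touching the network. Wrapped in a script that calls `crawl_list`, a crontab entry along the lines of `0 1 * * * /path/to/script.sh` (path hypothetical) would run it nightly at 1am.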

arxivdaleascii

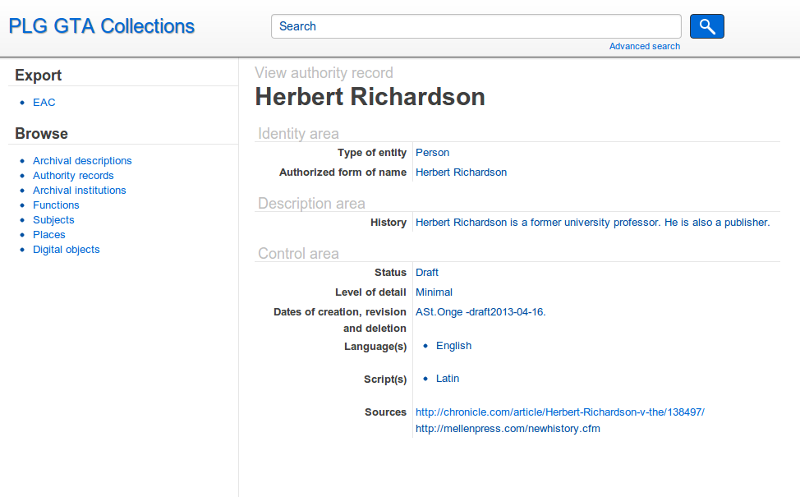

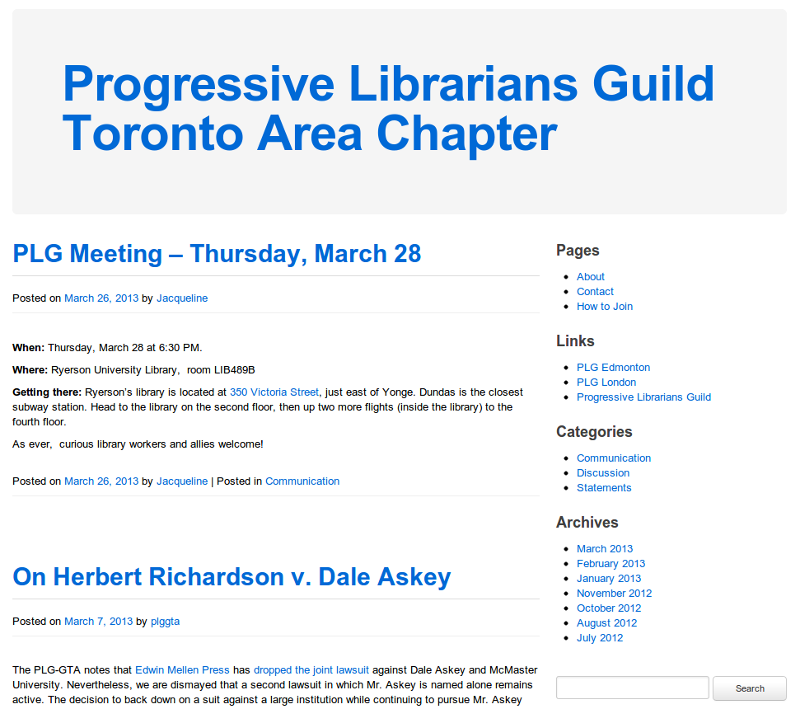

Then one day at a PLG meeting, I proposed that we do something with this collection. Make it available! Then, with the wonderful assistance of Anna St. Onge & Jacqueline Whyte Appleby, we have been slowly organizing and describing this collection, using the solution pack I created for Islandora, and AtoM (Access to Memory) for creating archival descriptions.

Anna has been doing the heavy lifting here as far as content. Creating authority records, and working on descriptions. This is where a lot of new problems and old problems pop up: where should something live, and how do you pull something from something else if it lives somewhere else?

Now the stuff I'm not good at. I do activism. I do social justice. But, I'm horrible at explaining the why. But, I'll give it a shot.

Hopefully I can do better than this guy. Whether you have realized it or not, we have a significant amount of power in our positions and our technical know-how. Some of us wield a massive amount of power in our positions. We have control of, or access to, storage and computing infrastructure, and can do some pretty great things. We can download every episode of Game of Thrones with said infrastructure, or we can be an Archiveteam Warrior and help capture and preserve Yahoo! Messages.

Power

Ethics

Responsibility

I really take to heart the social justice and social responsibility, and I believe it goes hand-in-hand with the ethics of our profession. This is why I helped found a local PLG chapter with a bunch of other amazing library workers. We are Toronto-area library workers who are concerned with social justice and equality issues, charged with the stewardship of knowledge, championing open access to information, and preserving common space. We are interested in issues of freedom of expression, attacks on Canadian heritage, freedom of information, privacy, censorship, copyright, equitable access to information, the fostering of critical information literacy, and the broad social implications of the commodification of information and increasing corporate influence on libraries. As library workers, we recognize that the increasing lack of job security, de-professionalization, and casualization of our profession threatens the “free public sphere which makes an independent democratic civil society possible.”

We have to stand up and protect the values and ethics of our profession. We have to be librarians and archivists and library workers in our own right. The libraries and associations we work for and are a part of don't always have the best interests of librarians, archivists, and library workers in mind. So, hold it sacred and do amazing things. There is something valuable and fantastic that you can contribute to, whether it be volunteering somewhere, running an Archiveteam Warrior on a spare machine, or arranging and describing a collection for an underrepresented group.

...

We sit squarely in the social conscience of the information world. We have to ask ourselves, what makes a library? Is it a room full of books? A delivery mechanism for commercial online products? No. It is the way the library workers animate our collections and critique the commercial entities where necessary. It is the attention to literacy and social values that differentiates librarianship from most other kinds of information professions. Dusted off, repolished and reframed for the 21st century, this attentiveness will be our calling card, our hallmark, our badge of honour.

Somebody always says it better

:-)

Questions?